Liam Baum : Sonic Pi DrumRNN GUI

Sonic Pi DrumRNN GUI

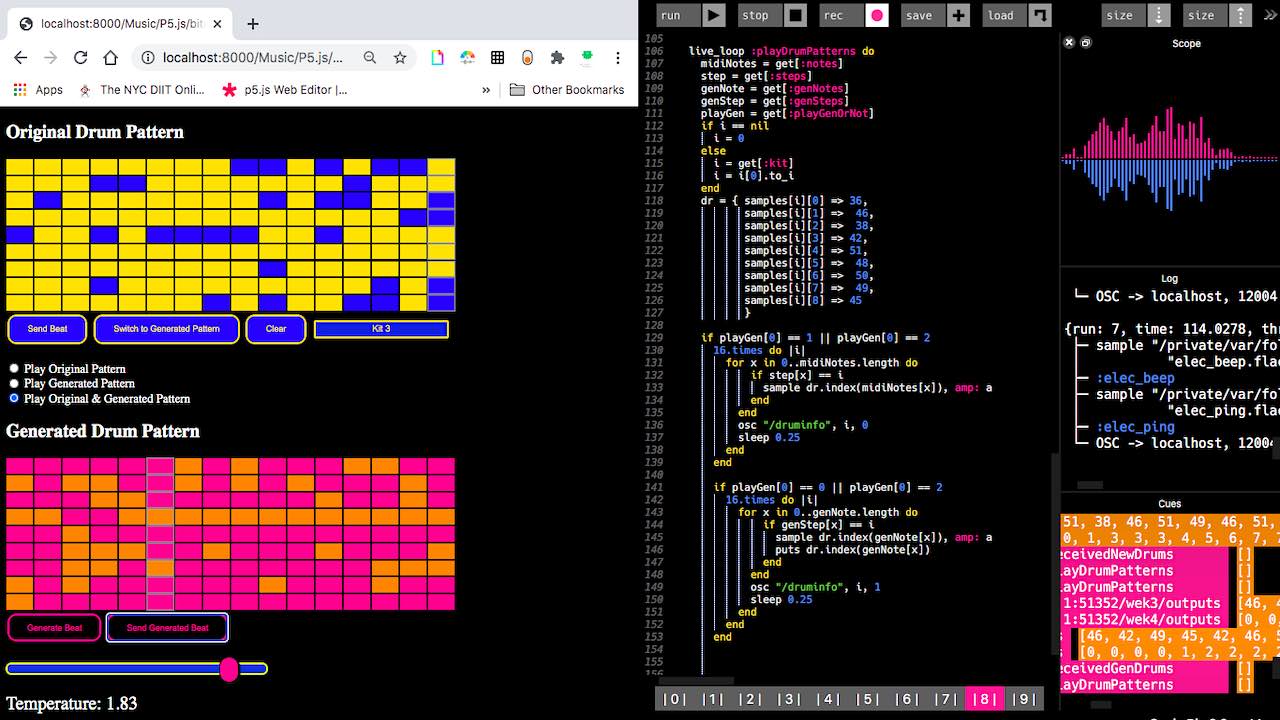

This project uses a machine learning model to generate continuations of a drum pattern. Patterns are created in the grid interface and then sent to Sonic Pi. They can be edited and regenerated in real time, so they can be used as part of a live coding performance.
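The sketch below illustrates the first step. It turns an on/off grid into the kind of quantized drum sequence that Magenta.js works with. It is written in Python for illustration only; the project itself appears to use JavaScript libraries (see Resources). The sketch makes several assumptions. The grid is hypothetical and has three rows: kick, snare and closed hi-hat, mapped to General MIDI drum pitches 36, 38 and 42. Each column is a 16th-note step (`stepsPerQuarter: 4`). The project's real grid size and pitch mapping may differ, and the function name `grid_to_note_sequence` is hypothetical. The field names follow the NoteSequence JSON shown in the Magenta.js tutorials.

```python
# Hypothetical row-to-pitch mapping (General MIDI drum numbers); the project's
# actual mapping may differ.
ROW_PITCHES = [36, 38, 42]  # kick, snare, closed hi-hat


def grid_to_note_sequence(grid, steps_per_quarter=4):
    """Convert a 2D on/off grid (rows = drum voices, columns = steps) into a
    quantized drum sequence shaped like Magenta.js NoteSequence JSON."""
    if len(grid) != len(ROW_PITCHES):
        raise ValueError("expected one grid row per drum voice")
    num_steps = len(grid[0])
    notes = []
    # Walk the grid step by step so the notes come out in time order.
    for step in range(num_steps):
        for row, pitch in enumerate(ROW_PITCHES):
            if grid[row][step]:
                notes.append({
                    "pitch": pitch,
                    "quantizedStartStep": step,
                    "quantizedEndStep": step + 1,  # each hit lasts one step
                    "isDrum": True,
                })
    return {
        "notes": notes,
        "quantizationInfo": {"stepsPerQuarter": steps_per_quarter},
        "totalQuantizedSteps": num_steps,
    }


if __name__ == "__main__":
    grid = [
        [1, 0, 0, 0, 1, 0, 0, 0],  # kick
        [0, 0, 1, 0, 0, 0, 1, 0],  # snare
        [1, 1, 1, 1, 1, 1, 1, 1],  # hi-hat
    ]
    seq = grid_to_note_sequence(grid)
    print(len(seq["notes"]))  # 12
```

In Magenta.js, a sequence in this shape can serve as the seed for a DrumRNN `MusicRNN` model's `continueSequence` method, which returns the generated continuation.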

This project was created as a way to bring machine learning into live coding performance. It aims to make collaboration between musician and model happen in real time, as an ongoing feedback cycle. The model generates output based on what the musician plays, the musician reacts to that output, and the loop continues throughout the performance.

Frequently Asked Questions

What inspired you to do this?

I was looking for a way to incorporate machine learning into some sort of interactive scenario where I could create generative output during a live performance and react to it in real time, essentially making it a collaborative performance between a person and a machine learning model.

How long did it take to make it?

The original version probably took about a week to code before I had a working prototype, and I used that version for a while. When I entered a hackathon for machine learning and music, I decided it was a good opportunity to improve the design. That took roughly another two weeks of coding to reach the current version.

How long have you been doing things like this?

I've been working with Sonic Pi for almost 5 years and started messing around with machine learning in a musical context about 2 years ago.

How much did this cost to do?

100% open source. Didn't cost me anything but time.

Have you done other things like this?

I have made some other machine learning music projects but nothing that is interactive.

What did you wish you knew before you started this?

In an earlier version of this project, I made drum patterns in Sonic Pi, sent them to the DrumRNN model, and had the results returned to Sonic Pi. Going back and forth between Sonic Pi and the model was cumbersome, and the code for the original drum beats was hard to keep track of. Apart from listening, I had to rely on data logged to the console to get any sense of what the output looked like. The GUI made the whole process much easier, so I wish I had known about the NexusUI library when I first started.

Are there plans available to make this? Do you sell this?

See the GitHub repo for all the code: https://github.com/mrbombmusic/sonic-pi-drum-rnn-gui

What’s next?

I would like to try and do some live performances with it.

I have done a couple of live streams.

Session 1: https://youtu.be/Wi4WUCyiJ3w

Session 2: https://youtu.be/qgZqiyipBuk

Resources?

Sonic Pi: https://sonic-pi.net/

Magenta.js intro: https://hello-magenta.glitch.me/

p5js OSC library: https://github.com/genekogan/p5js-osc

NexusUI: http://nexus-js.github.io/ui/

Liam Baum

Musician / Educator / Creative Coder / Tinkerer

Queens, NYC

I am a musician and educator who teaches at a public middle school in Queens, NY. I have spent the past several years exploring how coding and technology can be incorporated into creative music-making experiences. I work with code and hardware to make musical instruments and interfaces that can be played in the physical world, as well as purely code-based projects that can be used as instruments.

I do this as a hobby but have also found ways to bring creative coding and physical computing to my students. I have also led several workshops, both in person and virtually, on combining music with coding and technology using different languages and hardware.

Connect with Liam Baum

How I can help you:

I am an educator and musician who is happy to answer questions about music and coding.

There is also a detailed GitHub repo for this project so others can try to build it themselves.

How you can help me:

Check out my YouTube channel.

Follow me on Twitter.

Book me for a workshop.

Here's a shareable link to this project: https://makermusicfestival.com/projectdirectory/sonic-pi-drumrnn-gui/

The information and media presented for this project were provided by its creator. The maker owns and has copyright on all materials submitted. The maker retains all intellectual property rights, including copyright, patents, and registered designs.